MATH 251: Statistical and Machine Learning Classification

Instructor: Guangliang Chen

Course information [Syllabus]

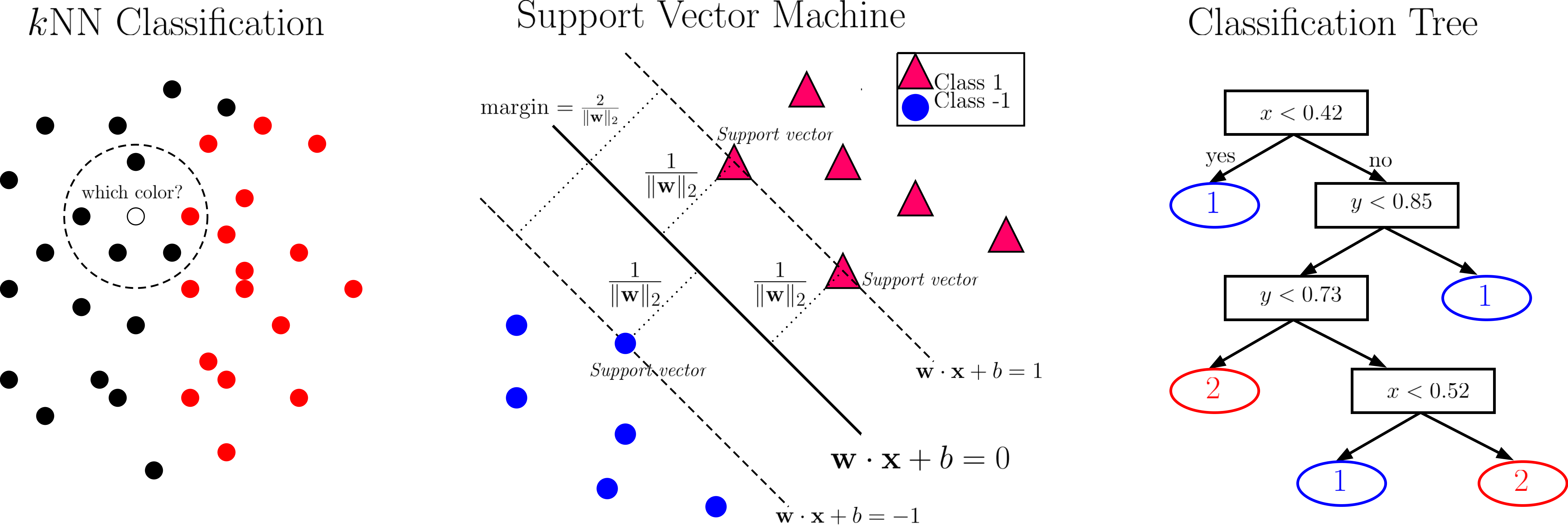

This is a graduate-level course on classification, a core task in machine learning, covering the following topics:

- Instance-based Methods

- Discriminant Analysis

- Logistic Regression

- Support Vector Machine

- Kernel Methods

- Ensemble Methods

- Neural Networks

all based on the MNIST Handwritten Digits benchmark dataset. This teaching strategy was partly inspired by Michael Nielsen's free online book, Neural Networks and Deep Learning, which notes explicitly that this dataset hits a “sweet spot”: it is challenging, but “not so difficult as to require an extremely complicated solution, or tremendous computational power”. In addition, the digit recognition problem is very easy to understand, yet practically important.

Prerequisites: Math 164 and Math 250

Technology requirements:

- Zoom: Class meetings will be on Zoom [Click here to register].

- Canvas: Assignments and their grades will be posted in Canvas (accessible also via http://one.sjsu.edu/).

- Proctorio: Tests will be delivered via Proctorio.

- Piazza: This course will use Piazza as the bulletin board. Please post all course-related questions there.

Recommended readings:

- James, Witten, Hastie and Tibshirani (2017), “An Introduction to Statistical Learning with Applications in R”, Springer

- Hastie, Tibshirani, and Friedman (2009), “The Elements of Statistical Learning: Data Mining, Inference, and Prediction”, Springer-Verlag

- Nielsen (2015), “Neural Networks and Deep Learning”, Determination Press

- Goodfellow, Bengio, and Courville (2016), “Deep Learning”, MIT Press

Course progress

| | Lecture Slides | Further Reading |
|---|---|---|
| 0 | Introduction [slides] | |
| 1 | Instance-based classifiers [slides] | Sections 2.2.3 and 5.1 of recommended reading 1 |
| 2 | Dimension reduction for classification [slides] | 2DLDA paper |
| 3 | Bayes classifiers [slides] | Section 4.4 of recommended reading 1 |
| 4 | Logistic regression [slides] | Section 4.3 of recommended reading 1 |
| 5 | Support vector machine [slides] | [Chapter 9 of recommended reading 1] [Lagrange Dual] |
| 6 | Evaluation criteria [slides] | |
| 7 | Ensemble learning [slides] | [Trevor Hastie's slides] [Adele Cutler's lecture] [Chapter 8 of textbook] |
| 8 | Neural networks [slides] | [Michael Nielsen’s book] [Olga Veksler’s lecture] [Perceptron] |
| 9 | Introduction to deep learning [slides] | Stanford CS 231n course page |

More learning resources

Useful course websites

- Prof. Veksler's CS9840a Learning and Computer Vision at University of Western Ontario

- Andrew Ng's CS 229 Machine Learning at Stanford University

- Manik's CSL 864 - Special Topics in AI: Classification at Microsoft

Data sets

- USPS Zip Code Data

- UCI Machine Learning Repository

- LibSVM data sets

- Extended Yale Face Database B

- Oxford Flowers Category Datasets

Instructor feedback

Feedback at any time of the semester is encouraged and greatly appreciated, and will be seriously considered by the instructor for improving the course experience for both you and your classmates. Please submit your anonymous feedback through this page.